This question, which borders on the philosophical, is more relevant than ever in the world of AI. OpenAI has been promising that its next model will greatly improve reasoning, and there are many theories floating around about Q*, Strawberry, Orion... But beyond the hype, the fundamental idea comes from one of the key prompting techniques: "Let's think step by step."

To get a quality response from a large language model (LLM) or improve its accuracy, it's crucial to provide the context and define the task as specifically as possible—without ambiguity. This helps guide the LLM in the right direction. If there are gaps or ambiguities in the context, the LLM will have to fill them in statistically, which increases the risk of error with each prediction (or token generation).
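As a rough back-of-the-envelope illustration of why the risk grows with each generated token, here is a toy calculation. It assumes every token is "right" independently and with the same probability, which is a deliberate simplification and not how an LLM actually behaves; the function name and the numbers are made up for illustration.

```python
def chance_all_tokens_ok(per_token_accuracy: float, num_tokens: int) -> float:
    """Toy model: probability that every one of num_tokens tokens is right,
    ASSUMING each token is right independently with the same probability."""
    return per_token_accuracy ** num_tokens


# Compare two hypothetical per-token accuracies over responses of growing length.
for accuracy in (0.99, 0.999):
    for length in (10, 100, 500):
        chance = chance_all_tokens_ok(accuracy, length)
        print(f"accuracy={accuracy}, tokens={length}: {chance:.3f}")
```

Even in this simplified picture, a small gain in per-token reliability (for example, from clearer context) makes a large difference over a long response.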

Why "Step-by-Step Thinking" improves responses

What does "Let's think step by step" do?

It prompts the model to reason through the problem before giving a final answer. This isn't exactly the same as "pausing to think" and then reaching a conclusion, because the "thinking process" is itself generated as part of the response.

In a way, we're asking the LLM to generate high-quality context and instructions before producing an answer. This means that by the time the final response is ready, it will be based on better information, leading to an improved answer.
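To make this concrete, here is a minimal sketch of what the technique looks like in code. The helper names, the "Final answer:" marker, and `fake_model` are all assumptions for illustration; `fake_model` is a canned stand-in for a real LLM call, not any provider's API.

```python
from typing import Tuple

COT_TRIGGER = "Let's think step by step."


def build_cot_prompt(question: str) -> str:
    """Append the trigger phrase so the model writes its reasoning first."""
    return f"Q: {question}\nA: {COT_TRIGGER}"


def split_reasoning_and_answer(
    completion: str, marker: str = "Final answer:"
) -> Tuple[str, str]:
    """Split a completion into (reasoning, answer) at the last marker.
    If the marker is missing, the whole completion is treated as the answer."""
    reasoning, sep, answer = completion.rpartition(marker)
    if not sep:
        return "", completion.strip()
    return reasoning.strip(), answer.strip()


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real LLM call; returns a canned completion.
    return (
        "There are 3 boxes with 4 apples each, so 3 * 4 = 12 apples.\n"
        "Final answer: 12"
    )


prompt = build_cot_prompt("I have 3 boxes with 4 apples each. How many apples?")
reasoning, answer = split_reasoning_and_answer(fake_model(prompt))
print(reasoning)
print(answer)
```

Note that the reasoning and the answer arrive in the same completion: the reasoning is simply text generated earlier, which the final answer can then build on.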

"Let's think step by step" could be seen as a self-contained version of a more fundamental prompting technique—"Reflection"—which asks the model to analyze its previous response, propose improvements, and apply them. These techniques aren't mutually exclusive, and more advanced LLMs already have some form of "Let's think step by step" built in.

This chain of reasoning steps is called Chain-of-Thought, and there are various methods and techniques to leverage it. While it's easy to use in prompting, these techniques are now being applied on a larger scale in training new models.
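The Reflection idea mentioned above (answer, analyze the answer, then improve it) can be sketched as a small loop. This is only an illustration under stated assumptions: the prompt wording, the `reflect` function, and `fake_model` are hypothetical, and `fake_model` returns canned text instead of calling a real LLM.

```python
from typing import Callable

Model = Callable[[str], str]  # any function mapping a prompt to a completion


def reflect(model: Model, question: str, rounds: int = 1) -> str:
    """Draft an answer, then repeatedly critique and revise it."""
    answer = model(f"Question: {question}\nAnswer:")
    for _ in range(rounds):
        critique = model(
            f"Question: {question}\nPrevious answer: {answer}\n"
            "List any mistakes or possible improvements in the previous answer."
        )
        answer = model(
            f"Question: {question}\nPrevious answer: {answer}\n"
            f"Critique: {critique}\nWrite an improved answer."
        )
    return answer


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real LLM: picks a canned reply per prompt type.
    if "Write an improved answer" in prompt:
        return "Paris is the capital of France."
    if "List any mistakes" in prompt:
        return "The answer names the wrong city."
    return "Lyon is the capital of France."


print(reflect(fake_model, "What is the capital of France?"))
```

Each round costs two extra model calls, which is the trade-off behind this family of techniques: more computation spent before the final answer.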

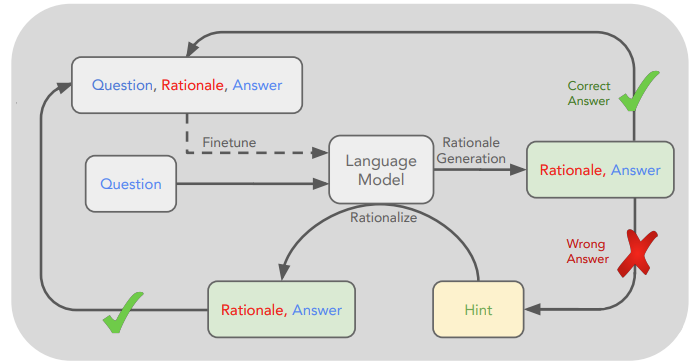

The paper "STaR: Self-Taught Reasoner Bootstrapping Reasoning With Reasoning" proposes a technique in which the model generates reasoning for its answers, and the correct answers (along with their reasoning) are selected for retraining.

This process is repeated iteratively, with the aim of training the model to generate better reasoning, which indirectly leads to more correct answers.
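A simplified sketch of the loop as described here (generate reasoning, keep only the samples with a correct answer, retrain, repeat) might look like the following. It is not the paper's implementation and leaves out details of the full method; `toy_generate` and `toy_finetune` are hypothetical stand-ins, and no real model is trained.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]               # (question, correct answer)
Sample = Tuple[str, str, str]           # (question, rationale, answer)
Generate = Callable[[str], Tuple[str, str]]  # question -> (rationale, answer)


def filter_correct(generate: Generate, dataset: List[Example]) -> List[Sample]:
    """Keep only the generations whose final answer matches the known answer."""
    kept = []
    for question, gold in dataset:
        rationale, answer = generate(question)
        if answer == gold:
            kept.append((question, rationale, answer))
    return kept


def star(generate: Generate, finetune, dataset: List[Example], iterations: int) -> Generate:
    """Repeat: generate rationales, filter by correctness, retrain on the kept ones."""
    for _ in range(iterations):
        kept = filter_correct(generate, dataset)
        generate = finetune(generate, kept)
    return generate


def toy_generate(question: str) -> Tuple[str, str]:
    # Hypothetical stand-in for sampling from an LLM: adds the two numbers,
    # but makes a deliberate mistake whenever the first number is odd.
    a, b = (int(x) for x in question.split("+"))
    total = a + b if a % 2 == 0 else a + b + 1
    return f"{a} plus {b} gives {total}.", str(total)


training_sizes: List[int] = []


def toy_finetune(generate: Generate, samples: List[Sample]) -> Generate:
    # Placeholder: a real implementation would update model weights here.
    training_sizes.append(len(samples))
    return generate


dataset = [("2+2", "4"), ("3+5", "8"), ("4+1", "5")]
kept = filter_correct(toy_generate, dataset)
print([question for question, _, _ in kept])
star(toy_generate, toy_finetune, dataset, iterations=2)
```

The key design choice is that only the final answer is checked, so the reasoning is rewarded indirectly: rationales survive the filter only when they lead to a correct result.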

Open-Source Challenges Big Tech

It's believed that Big Tech companies are using these and other techniques to scale up their models. But while they're burning through GPUs at an incredible rate, Matt Shumer has surprised everyone by releasing "Reflection," a 70B open-source model that, according to his announcement, outperforms proprietary state-of-the-art models like GPT-4o or Claude 3.5 Sonnet.

This Llama 3.1 70B model specializes in performing Reflection before generating its final answer. In simpler terms, it produces better responses by internally generating the necessary reasoning to arrive at the best answer.

This unexpected launch is bound to shake things up, and it will likely force Big Tech to make a move. For now, their main value proposition seems to be execution at scale (which, to be fair, is critical).

From Wow Moments to Reality

I remember reading about the prompting technique "Reflection" when ChatGPT first came out. It was one of those "WOW" moments. It's exciting to see something so conceptually simple gaining traction.

It also made me think about the original question, "What is thinking?", and about popular phrases like "Think twice" or techniques like "The 5 Whys."

While we idealize thinking as something intangible and uniquely human, perhaps it could be defined as the ability to imagine different scenarios to solve a problem. These imagined solutions lead to further improvement or new, better solutions. On the scale of the human brain, this is what we might call tree-like or rhizomatic thinking.

Will we be able to create something similar to rhizomatic thinking in machines?

For now, these techniques are more about selecting the correct pre-existing answer. But at scale, could we jump to the discovery or creation of new synthetic knowledge?

Very interesting questions, but ones I doubt an LLM can answer—yet ;-)

And you, have you taken a moment to think?

References:

STaR: Self-Taught Reasoner Bootstrapping Reasoning With Reasoning: https://proceedings.neurips.cc/paper_files/paper/2022/file/639a9a172c044fbb64175b5fad42e9a5-Paper-Conference.pdf

Reflection model launch by Matt Shumer: https://x.com/mattshumer_/status/1831767014341538166

Rhizomatic Thinking: https://en.wikipedia.org/wiki/Rhizome_(philosophy)

Five Whys method: https://en.wikipedia.org/wiki/Five_whys

OK, this post was written last Friday and scheduled for today and... a lot has happened since then, haha.

This weekend, the AI community rushed to validate Matt's model, and it's now very unclear whether the Reflection technique is that effective when applied through a dataset and fine-tuning.

In any case, I still trust Matt. Whether or not it worked, this technique is genuinely useful in system prompts or regular prompting, as is the broader idea of finding ways to let models invest more computation in better rationales.

Let's see what happens 🍿

As a former philosophy student, I expected you to delve into the definitions of the technical terms and then ground them in your area of expertise.

If one day you try to delve into this theoretical jungle, I'll be the one bringing popcorn 😂